Automation is a Force Multiplier

Posted by ieDaddy | Aug 23, 2017 | devOps, Technology | 0

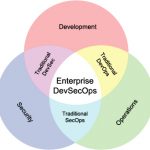

DevOps – Job Title, Tools Or Process?

Posted by ieDaddy | Mar 23, 2017 | devOps, Technology | 0

Latest

Is There Such a Thing As Good Technical Debt?

I like the term “Technical Debt” because it is an easy metaphor for the average business owner to...

Top Rated

Sign In As A Different User In SharePoint

by ieDaddy | Nov 23, 2014 | SharePoint | 0

I’ve seen or searched for this about a million times so far, so why not write up a quick...

Popular

SharePoint 2010-Change Survey Option “Specify your own value:” to “Other:”

by ieDaddy | May 14, 2012 | SharePoint | 22

Recently I had a request from a business unit to change the Out of Box wording on a survey that...

SharePoint 2010–SQL View to get User Profile Property Bag Values

by ieDaddy | Mar 5, 2012 | SharePoint | 8

Wrong Domain Name in AccountName after User Profile Sync

by ieDaddy | Jan 23, 2012 | SharePoint | 8

Sustainable Living, Sustainable Resources

Latest

Annual Greening and Cleaning of the Garage

by ieDaddy | Dec 22, 2017 | Sustainable Living | 1

As we start to roll into the New Year, it’s time for the annual cleaning and greening of the...

Why Doesn’t Your City Have Curbside Composting?

by ieDaddy | Sep 12, 2012 | Composting | 0

Free Kindle books for the Homeschooler

by ieDaddy | Aug 24, 2012 | Home Schooling | 2

Wash Your Organic Produce. No, Really.

by ieDaddy | Aug 22, 2012 | Organic Living | 0

The Work-Life Balance Myth

by ieDaddy | Aug 21, 2012 | Continuous Learning | 1

Feature Flags as a Continuous Delivery Release Tool

“Are feature flags better for risk mitigation, fast feedback, hypothesis-driven development or...

Categories

- Cooking (11)

- Barbeque (5)

- Pressure Cooker (1)

- devOps (21)

- Family (5)

- Microsoft Certification (2)

- Personal (5)

- Organization (1)

- Sustainable Living (58)

- Backyard Farming (14)

- Camping (2)

- Composting (4)

- Continuous Learning (13)

- Gardening (8)

- Home Schooling (4)

- Organic Living (18)

- Recycling (2)

- Sustainable Resources (21)

- Technology (282)

- .NET (9)

- Active Directory (6)

- Consulting (2)

- CSS (2)

- Geek Fu (23)

- HTML5 (3)

- IIS (2)

- InfoPath (2)

- JavaScript (3)

- Microsoft (3)

- Microsoft Office (3)

- PowerShell (31)

- Search (4)

- SharePoint (201)

- SQL Server (7)

- Team Foundation Server (6)

- Training (8)

- User Groups (5)

- Visual Studio (4)

- Windows (6)

- Windows Server 2012 (3)

- Uncategorized (9)

- Writing (3)